What roles do you need on a team running AI-driven QA?

Dmitry Reznik

Chief Product Officer

The use of GenAI and analytical AI is still gaining ground: More than three-quarters of those surveyed by McKinsey now say their companies use AI in at least one business function.

But there is also the UK’s Department for Work and Pensions. They quietly gave up at least six AI prototype projects: jobcentre staff learning tools, disability benefit processing tools, and others. The Department reps cited persistent “frustrations and false starts” and the inability to make the systems “scalable, reliable and thoroughly tested”.

Irony aside, AI can automate tests, but it can’t replace the essential human roles: people who select what AI should test, validate its output, and align the logic with business risk.

Artificial intelligence finds defects faster than humans, but without a modern QA team that understands how to train and direct the model under the hood, the tool becomes a noisy alarmist rather than a force multiplier.

With the rapid escalation of this technology, it’s worth rethinking the QA team structure for AI. AI can autonomously scan apps and how users interact with them, generate test scenarios, and self-heal broken scripts.

What it can’t do is decide which tests matter most for you, interpret ambiguous results, or align output with business risk on its own. We expect this issue to be fixed in a couple of years… or not. But at the moment, the main gap is in the human architecture around modern tools.

That is why we wrote this article: to close that gap.

How AI is changing the makeup of QA teams

In 2025, the rule of thumb probably looks like this: “Whichever roles in AI testing you have, each team member should understand the basics of how LLMs and artificial intelligence work.” Only skilled teams can direct and validate AI’s use.

The shift from testers to test strategists

Traditional testers have been writing step-by-step scripts. With AI generating and maintaining baseline tests, they are shifting into higher-value work. Human expertise also shifts its focus:

- They decide which risk areas AI should cover first (but AI can prioritize tests as well after initial training and two to three learning runs)

- Humans understand release goals better, so they design better coverage strategies

- They review and approve AI-generated scripts before full integration

Expedia Group scaled AI testing with autonomous tests handling repetitive booking workflows. They have built more than 300 ML models to cover internal and customer-facing services.

At the same time, their AI-powered QA team focused on edge-case coverage in payments and loyalty programs. Eventually, they increased incremental transactions by 10%, generated USD 6B of incremental revenue for their partners, and reached a 65% satisfaction rate in customer surveys (satisfaction with inclusive AI features within the app; speaking from experience, not a bad rate).

Enhancement of development and ops teams

Traditional test automation is still siloed and mainly isolated from non-tech teams. To leverage non-techies’ experience and get the most out of AI tools, you need:

- Stable and observable environments: Log completeness, network monitoring, and error tracking.

- Access to development metadata: Code diffs, feature flags, and schema changes that help AI adapt.

- Ops integration: Tests run at the right stage in CI/CD, avoiding excessive false-failure “noise”.

This way, you are redesigning your entire development workflow: QAs collaborate more directly with developers, DevOps engineers, and non-tech leadership.

The need for new skills

If your team can only write Selenium or Cypress scripts, your transformation is doomed to failure.

What also matters now:

- Interpretation skills: Understanding probabilistic AI outputs that go beyond pass/fail marks.

- Data literacy: Knowing how to evaluate logs, training data, and coverage reports that drive AI decisions.

- Platform orchestration: Configuring AI test runners, scaling them across threads, and ensuring integration with observability tools.
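To make the interpretation point concrete, here is a minimal sketch of what reading a probabilistic output might look like. It assumes a hypothetical AI test runner that reports a failure probability per test; the `AiVerdict` shape, the `triage` function, and both thresholds are illustrative assumptions, not the schema of any real tool.

```python
from dataclasses import dataclass


@dataclass
class AiVerdict:
    """One AI-produced verdict (hypothetical shape, not a real tool's schema)."""
    test_name: str
    failure_probability: float  # model's estimate of a real defect, 0.0 to 1.0


def triage(verdict: AiVerdict, pass_below: float = 0.2, fail_above: float = 0.8) -> str:
    """Map a probability onto three buckets instead of a binary pass/fail."""
    if not 0.0 <= verdict.failure_probability <= 1.0:
        raise ValueError("failure_probability must be between 0.0 and 1.0")
    if verdict.failure_probability >= fail_above:
        return "fail"
    if verdict.failure_probability <= pass_below:
        return "pass"
    return "needs_review"  # the ambiguous band goes to a human


verdicts = [
    AiVerdict("checkout_total", 0.93),
    AiVerdict("login_redirect", 0.05),
    AiVerdict("loyalty_points_rounding", 0.55),
]
buckets = {v.test_name: triage(v) for v in verdicts}
print(buckets)
```

The thresholds are a team decision rather than a technical constant: tightening the middle band cuts review work but raises the chance that an ambiguous result is auto-classified incorrectly.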

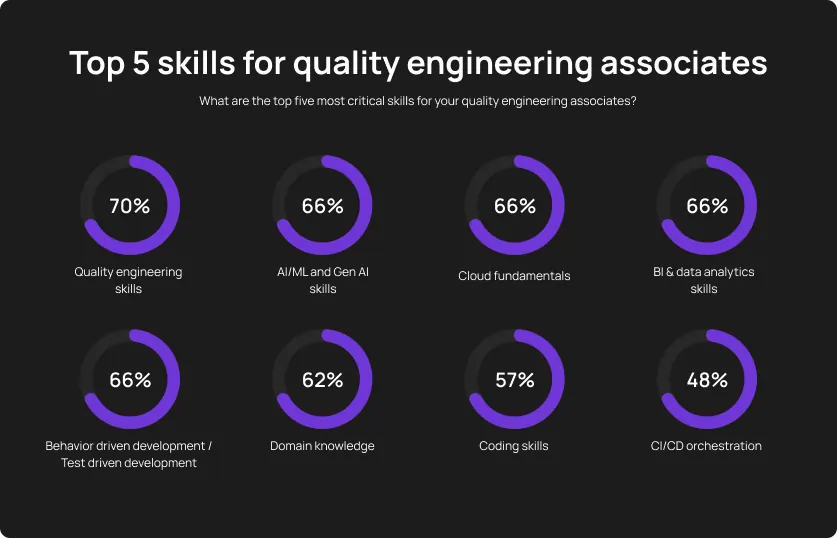

The World Quality Report 2024-25 notes data proficiency and AI/GenAI skills as core to the modern QA competency set. The tester of tomorrow is closer to a data-savvy strategist than a script-writing executor.

Core roles for an AI-powered QA team

To run and scale AI in QA tools effectively, teams need complementary roles with distinct responsibilities, flexible skills, and a clear fit in the workflow. Let’s name the most necessary ones.

QA Automation Engineer / SDET

- Responsibilities: Integrates AI tools into existing frameworks, validates AI-generated scripts, keeps a CI/CD pipeline reliable.

- Skills: Knows automation frameworks (e.g., Selenium, Playwright, Cypress) inside out, debugs auto-generated code when needed, has hands-on API testing skills.

- Workflow fit: They separate usable AI output from junk and connect the reliable AI solution to the engineering pipeline.

Test Strategist or QA Lead

- Responsibilities: Owns the test strategy, prioritizes high-risk coverage (or checks whether AI’s prioritization really works), aligns test output with release criteria.

- Skills: Risk-based testing, requirements analysis, KPI setting, stakeholder communication, and expectation management.

- Workflow fit: This role actually directs AI: it determines where AI should be introduced first, develops the testing process, and, above all, answers the question, “Why do you test?” The Test Strategist ensures your automated coverage delivers business value.

AI QA Analyst / Test Analyst

- Responsibilities: Interprets test tool outcomes, spots anomalies and false positives/negatives, confirms whether AI’s self-healing works as intended.

- Skills: Data analysis, log parsing, convergent thinking, and enough command of the core testing framework to discover anomalies in time.

- Workflow fit: They are the quality auditors of AI, guaranteeing the technology works properly.
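The analyst's audit of false positives and false negatives can be sketched as a simple set comparison. This is only an illustration under stated assumptions: `audit_flags` is a hypothetical helper, and the test names are made up; it assumes the analyst already has a list of AI-flagged tests and a list of defects that humans confirmed as real.

```python
def audit_flags(ai_flagged: set, confirmed_defects: set) -> dict:
    """Compare what the AI tool flagged with what humans confirmed as real defects."""
    true_positives = ai_flagged & confirmed_defects
    false_positives = ai_flagged - confirmed_defects  # noise: flagged, not a real defect
    false_negatives = confirmed_defects - ai_flagged  # misses: real defect, never flagged
    precision = len(true_positives) / len(ai_flagged) if ai_flagged else 0.0
    recall = len(true_positives) / len(confirmed_defects) if confirmed_defects else 0.0
    return {
        "false_positives": sorted(false_positives),
        "false_negatives": sorted(false_negatives),
        "precision": precision,
        "recall": recall,
    }


report = audit_flags(
    ai_flagged={"cart_total", "login_timeout", "promo_banner", "search_sort"},
    confirmed_defects={"cart_total", "login_timeout", "refund_rounding"},
)
print(report)
```

Tracking these two numbers across releases shows whether the tool is drifting toward alarmism (falling precision) or toward blind spots (falling recall).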

DevOps Engineer / CI/CD Specialist

- Responsibilities: Matches specific tests to the right pipeline stages, manages cloud execution and parallelism, and makes everything observable.

- Skills: Jenkins, GitLab CI/CD, Kubernetes, Docker, cloud execution services, monitoring stacks.

- Workflow fit: Without such specialists, you can’t build a genuine AI test automation team. They integrate AI tests into the delivery flow and prevent bottlenecks and pipeline instability.
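Matching tests to pipeline stages can start with a few explicit rules. The sketch below is one possible rule set, not a recommendation from any CI vendor: the `CandidateTest` fields, the stage names, and the thresholds are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    """A test waiting for a pipeline slot (hypothetical fields)."""
    name: str
    avg_seconds: float       # average runtime over recent runs
    flaky_rate: float        # share of recent runs that failed, then passed on retry
    is_smoke: bool = False   # checks that the deployed app is basically alive


def assign_stage(
    test: CandidateTest,
    max_pre_merge_seconds: float = 60.0,
    max_flaky_rate: float = 0.05,
) -> str:
    """Pick a stage so fast, stable tests gate merges and noisy ones gate nothing."""
    if test.flaky_rate > max_flaky_rate:
        return "quarantine"   # keep false failures out of the delivery flow
    if test.is_smoke:
        return "post_deploy"
    if test.avg_seconds <= max_pre_merge_seconds:
        return "pre_merge"
    return "nightly"          # slow but stable: run off the critical path


suite = [
    CandidateTest("api_contract", avg_seconds=12, flaky_rate=0.0),
    CandidateTest("full_booking_flow", avg_seconds=480, flaky_rate=0.01),
    CandidateTest("homepage_loads", avg_seconds=5, flaky_rate=0.0, is_smoke=True),
    CandidateTest("chat_widget", avg_seconds=30, flaky_rate=0.2),
]
plan = {t.name: assign_stage(t) for t in suite}
print(plan)
```

Checking flakiness first is a deliberate choice here: a noisy test is kept away from every gate, regardless of how fast or important it looks.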

Product-Aware Quality Advocate

- Responsibilities: Ensures real user journeys and business-critical scenarios are considered before scaling AI in QA tools.

- Skills: Deep product/domain expertise and understanding of user journey, exploratory testing.

- Workflow fit: They make sure your team doesn’t waste effort and resources on irrelevant edge cases with low business value.

AI Champion or Enablement Lead (optional in larger teams)

- Responsibilities: Owns the entire AI adoption strategy, spots knowledge gaps in specific teams and closes them with training, builds playbooks, and aligns cross-functional teams.

- Skills: Change management, cross-team facilitation, QA tool configuration, metrics set-up.

- Workflow fit: Ensures AI testing is a sustainable and effective capability for your company.

Key collaboration models that make AI QA work

In 2019, MIT stated that 70% of AI projects delivered little to no value. Now, this number is even higher (about 85%). But Google, Meta, and other behemoths are still investing hard in artificial intelligence.

That’s because they know the lion’s share of AI adoption depends on humans. And Harvard Business Review notes that only 25% of those surveyed are confident in their data skills (while about 90% of business owners named data as the cornerstone for their operations and, eventually, success).

The conclusion is as simple as pie: invest your time and effort in building a genuine AI-powered QA team, skilled, confident, and a bit Walter Mitty-minded. Here is what it should look like.

How to build or evolve your team for AI QA

The main trap is to think it’s a hiring problem. We think it is rather an orchestration problem. The fastest wins come from evolving the skills, workflows, and tools you already have.

Step 1: Assess your current roles and responsibilities

List existing QA, dev, and ops responsibilities. Include AI-relevant functions: strategy definition, automated test design, data management, and others. Gaps here often explain why AI pilots fall flat.

Step 2: Let your team learn

Begin with targeted learning: teach them prompt engineering for GenAI tools, train dev leads in reading AI-generated coverage maps, let non-techies vibe code. Then, you can proceed to broader, mindset-changing learning. Skills > headcount.

Step 3: Start small and move by iterating

Form a cross-functional pod with clear owners for test design, AI oversight, and tool maintenance. Run a single high-impact use case (e.g., automating post-deployment smoke tests) before expanding to full regression coverage.

Step 4: Choose tools that match your team’s capacity

Select platforms with automation-ops fit. Check how OwlityAI minimizes infrastructure overhead and lets smaller teams operate at enterprise-level velocity without puffing up complexity.

How OwlityAI empowers modern QA teams

Sounds suspicious, but the secret sauce is to open your mind. Without the readiness to absorb new information and ditch biases about AI effectiveness, you’re doomed to failure. Here is how OwlityAI breaks those biases in practice.

Cuts heavy scripting and large-team dependency: Traditional QA automation often means week-long manual scripting and constantly expanding the team to keep all the processes effective (but not that efficient, innit?). OwlityAI’s adaptive model auto-generates and updates tests, so you don’t have to struggle with either.

Pinpoints high-risk areas in time: Many teams waste cycles testing low-impact areas while critical defects slip into production. Our tool has risk-based prioritization that analyzes code changes, usage data, and defect history to direct testing where failures are most likely.
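To give a feel for how risk-based prioritization can combine these three signals, here is a generic weighted-score sketch. It is not OwlityAI's actual algorithm: the `AreaSignals` fields, the weights, and the sample numbers are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AreaSignals:
    """Risk signals for one product area (hypothetical fields and values)."""
    name: str
    changed_lines: int   # code changes since the last release
    usage_share: float   # share of user sessions touching the area, 0.0 to 1.0
    past_defects: int    # defects logged against the area historically


def risk_scores(areas, weights=(0.4, 0.3, 0.3)):
    """Weighted sum of the three signals, each scaled to the 0..1 range."""
    w_change, w_usage, w_defect = weights
    # "or 1" avoids division by zero when no area has changes or defects
    max_changed = max((a.changed_lines for a in areas), default=0) or 1
    max_defects = max((a.past_defects for a in areas), default=0) or 1
    return {
        a.name: w_change * a.changed_lines / max_changed
        + w_usage * a.usage_share
        + w_defect * a.past_defects / max_defects
        for a in areas
    }


def prioritize(areas, weights=(0.4, 0.3, 0.3)):
    """Return area names ordered from highest to lowest risk score."""
    scores = risk_scores(areas, weights)
    return sorted(scores, key=scores.get, reverse=True)


areas = [
    AreaSignals("profile", changed_lines=20, usage_share=0.2, past_defects=1),
    AreaSignals("search", changed_lines=300, usage_share=0.3, past_defects=2),
    AreaSignals("payments", changed_lines=120, usage_share=0.5, past_defects=8),
]
order = prioritize(areas)
print(order)
```

Note that `search` has the most code churn but still ranks below `payments`, because usage and defect history pull in the other direction. Choosing the weights is exactly the kind of business-risk call that stays with the test strategist.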

Keeps QA, dev, and product on the same page: Misunderstanding and misalignment are the poison of the 21st century; they delay releases and cause duplicated work. OwlityAI solves this with collaborative dashboards that show test coverage, execution status, and defect impact, updated in real time.

Bottom line

Mapping the QA team structure for AI is challenging. Not because it’s sophisticated rocket science, but because so many companies just don’t know all the options for tuning the right AI tool, streamlining clear processes, and revising the testing stage along the way.

Not sure what roles in end-to-end AI testing you need? Book a free 30-min call with our team, and let’s see how we can build and run scalable, AI-powered workflows with fewer resources for your company.
