What AI can’t do in QA and why you still need people

Dmitry Reznik

Chief Product Officer


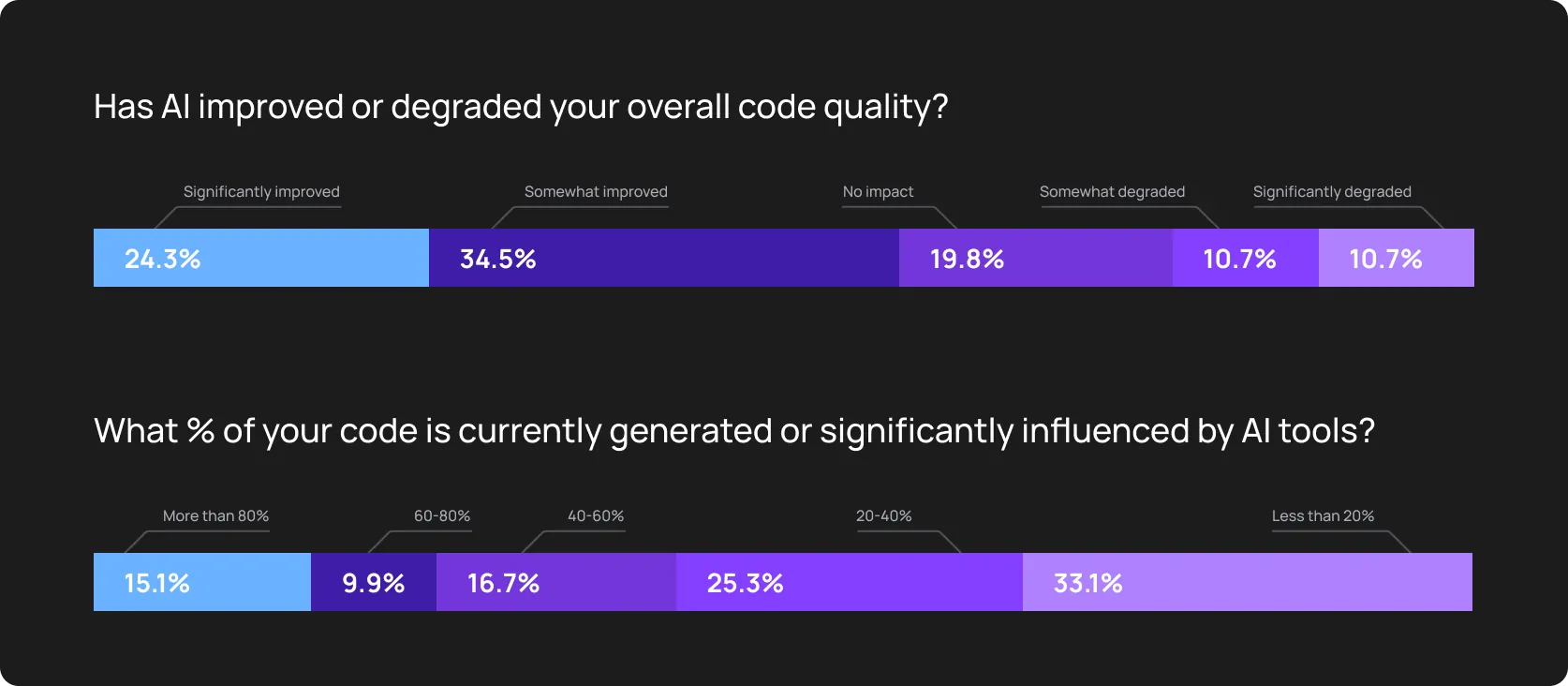

76% of respondents in a Katalon survey use AI testing tools. More interesting still, 56% of QA teams struggle to keep up with modern testing demands.

The icing on the cake: the suspicion that “AI will replace testers and devs” is gaining ground fast. It looks like people don’t really understand what AI is good at and where it breaks down.

Put differently, adopting AI doesn’t automatically improve quality.

Two hot takes up front:

- Any modern AI-powered tool is at its best on repeatable, structured, data-heavy tasks: pattern detection, classification, prioritisation, and high-volume execution.

- What AI can’t do in QA is judgment, context, trade-offs, and connecting tactical moves with strategic product intent.

Another niche report indicates that 44% of engineering leaders cite missing relevant context as the main flaw of AI in QA.

It may surprise you, but look up several AI-related reports on coding, software testing, decision-making in engineering, whatever. You’ll likely notice one common thing: the “AI testing vs human testers” perspective is quietly being replaced by “has AI made you happier and your work easier?”

This article follows the same logic. We swap the replacement framing for a cooperative one, because the most effective teams combine AI automation with human judgment to deliver reliable and safe software.

The myth of fully autonomous QA, and why it’s not real (yet)

Marketing often gets ahead of engineering reality. “Zero-human intervention” and “100% autonomous” are the most commonly cited features on product pages.

However, the “push-button” solution doesn’t exist yet.

While more than 50% of global companies are using AI for routine tasks in testing, the “fully autonomous” fairy tale seems ridiculous when we look at the complaints about real value.

Why a fully autonomous AI tool is a myth

- AI ≠ understanding business logic: When there is a well-defined flow or even a checklist, modern tools can outperform people in an “AI vs human decision-making” contest. Yet they don’t know that, on your specific one-pager, a user shouldn’t be able to apply a visible 5% discount within a section. This is why predefined rules and patterns matter so much.

- AI ≠ understanding user expectations or UX: AI can detect if an element is present in the DOM. It can’t tell you if that element is positioned so poorly that it frustrates a mobile user. It also can’t tell you your design makes the user suspicious about your offer.

- AI ≠ understanding long-term vision: You may plan to pivot from a subscription model to a usage-based one. Joe from your testing team will prepare the suite for this transition, and AI won’t (unless you prepare a specific knowledge base for it).

Even the most advanced tool doesn’t understand objective correctness or adequacy. It only understands consistency and whether the current logic matches predetermined parameters.

Let’s break down the obvious and the less obvious limitations of autonomous testing.

What AI can’t do in QA and why it matters

Neural networks can process loads of data points, but they can’t reliably guess the “mental model” of your business without repeated input from you. And this gap between execution and understanding can backfire one day.

1. AI can’t understand product intent

Any technology has its roadmap. The first step on almost any of them is understanding “what the situation IS”. Only after mastering this can the technology forecast “what it SHOULD BE”.

That sounds odd, but we are cutting nuances and simplifying on purpose.

In real products, testers constantly answer questions like these:

- Should this user flow exist, or can we cut it without harming functionality or clarity?

- Does this calculation make sense for the business model?

- The app behaves unexpectedly. So is this a problem of inadequate requirements, or did we break code accidentally?

Machine learning algorithms can also decide if a slight delay in performance is acceptable to ensure 100% data consistency during Black Friday, for example. But this requires the extra mile from your end: more time and money.

2. AI can’t prioritize risk like humans

Machines see the world through mathematical lenses. To an AI, an inactive contact form and a 500 error on the Buy button are the same: just failed tests. Humans weigh two things that the machine doesn’t:

- Revenue risk: Human testers understand that a failed payment is a stop-the-release event, and clumsily aligned dividers on the About page are just a 5-min fix.

- Customer pain: Humans can predict which bugs will cause the most vocal backlash on social media or lead to a spike in support tickets.
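One way to hand this judgment to a tool is to write it down as explicit, human-owned weights. The snippet below is a minimal sketch under that assumption: the area names, the weights, and the `triage` helper are all hypothetical and do not come from any specific product.

```python
from dataclasses import dataclass

# Hypothetical weights assigned by people who know the business:
# how much a failure in each product area hurts revenue or customers.
AREA_RISK = {"checkout": 10, "contact_form": 3, "about_page": 1}


@dataclass
class Failure:
    test_name: str
    area: str


def triage(failures, area_risk=AREA_RISK, blocker_threshold=8):
    """Sort failures by human-defined risk and flag the release blockers."""
    ranked = sorted(failures, key=lambda f: area_risk.get(f.area, 0), reverse=True)
    return [(f.test_name, area_risk.get(f.area, 0) >= blocker_threshold) for f in ranked]


failures = [
    Failure("dividers_aligned", "about_page"),
    Failure("buy_button_returns_500", "checkout"),
    Failure("contact_form_submits", "contact_form"),
]

result = triage(failures)
for name, blocks_release in result:
    print(name, "BLOCKER" if blocks_release else "can wait")
```

The tool still treats every item as a failed test. The ordering comes entirely from the weights a human supplied, which is the point.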

3. AI can’t identify usability problems

In the battle of AI testing vs human testers, there is an important “round” — usability. It’s more emotion and ergonomics than logic and analysis (a bit of it as well, though).

The algorithm scans for accessibility tags (e.g., ARIA labels), but it won’t tell you if the navigation flow is “clunky” or if the microcopy is arrogant for some nationalities or social strata.

Modern tools can also misinterpret user hesitation. Take second-language issues in fintech apps, where unclear copy on a button affects a payment or another important action.

At the same time, missing alt-text or UI-location problems are not rocket science for autonomous tools.

4. AI can’t validate complex domain-specific logic

Take the healthcare, medtech, or fintech industry. They all have strict regulations: HIPAA, SOX, GDPR, etc.

This is why, in heavily regulated niches, top-performing functionality is not enough: vendors should also anticipate what an auditor will look for and reflect it when developing test suites.

- Regulatory due diligence: If the model under the hood of your testing tool doesn’t track changes in the law on an ongoing basis, it’s a sign to carefully review AI’s outputs. For example, in late November 2025, the European Commission proposed adjustments to the AI Act, postponing some parts to 2027. Human testers (backed by lawyers) ensure the tool doesn't just work, but stays legal.

- Multi-tenant complexity: Human testers understand the “ripple effect” in SaaS projects. A planned change for customer A might unexpectedly break a configuration for customer B.

5. AI can’t resolve unclear failures

Even XBOW’s breakthrough to the number one position on HackerOne showed that over half of its autonomous findings required human validation. So, even the most advanced tools can sometimes lose their bearings in an app’s intricacies.

The role of testers in the AI era is more about vision and investigation:

- Is the spotted failure a real defect or just a flaky test?

- Did the environment drift or configuration change?

- Is the behavior correct but poorly documented?

So, basically, the human’s leg-up over machines is not only creativity but also critical thinking.

6. AI can’t own accountability or quality decisions

Caveat: It can decide. But it can’t really own the decision. “Yes, you’re right. I was mistaken. Do you want me to…” — we’ve all been there with GenAI models.

And this partially applies to AI in QA. The tool just blocks the release, and would you fight it?

High-level release readiness is your responsibility: weighing developer fatigue, market timing, and known technical debt is too much for AI’s plate, but an excellent fit for yours.

7. Communication is one of the strongest AI QA limitations

As we’ve already stated, product quality is a shared responsibility. That’s why it is no exaggeration to say that quality is 80% communication: answering the Project Manager’s “so what’s the status”, clarifying requirements with the Product Manager, and talking through edge cases with developers.

8. AI can’t maintain institutional memory

The training data is the foundation of the AI model… and its biggest limitation. In most cases, modern tools don’t remember the reasons for failed tests.

OwlityAI, for example, understands the reason behind the failure and learns from such patterns, improving over time.

What AI should do so that you don’t waste time

Despite all the limitations of AI in software testing, there is a clear benefit from using this technology. It saves time on repetitive tasks, and it saves money where human effort becomes too expensive to scale.

Below are 7 scenarios where AI delivers.

1. Repetitive regression testing

Autonomous testing tools generate, execute, and continuously update regression suites, whatever their size. And that’s all across browsers, devices, and configurations.

Where humans are stuck:

- Running the same checks release after release — this blurs their judgment

- Keeping coverage consistent — it’s difficult under fast delivery cycles

What AI delivers:

- High-volume execution

- Consistent validation regardless of environments

- Parallel runs that drastically shorten feedback loops

2. Self-healing test maintenance

The maintenance death spiral kills. Humans spend hours updating XPaths because a div ID changed.

- What AI delivers: OwlityAI and similar tools use computer vision: if the ID changes, they look for the element’s text, relative position, and shape. We call this healing: the tool corrects the script at runtime and simultaneously updates the repository.

- Gain: Up-to-date repo, healed UI elements — and a couple of hours for your team saved.
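The fallback idea can be sketched in a few lines. This is a simplified illustration, not how OwlityAI or any other tool is implemented: it swaps computer vision for plain attribute matching on a page modeled as a list of dictionaries, and `find_element` and `heal` are hypothetical helpers.

```python
def find_element(elements, locator):
    """Return (element, healed). Try the stored id first, then fall back to tag + text."""
    for el in elements:
        if el.get("id") == locator["id"]:
            return el, False
    for el in elements:
        if el.get("tag") == locator["tag"] and el.get("text") == locator["text"]:
            return el, True
    return None, False


def heal(locator, element):
    """Return an updated locator that points at the element's current id."""
    return {**locator, "id": element["id"]}


# Assumed scenario: the button id changed from "buy-btn" to "btn-42" in the latest build.
page = [
    {"id": "nav-home", "tag": "a", "text": "Home"},
    {"id": "btn-42", "tag": "button", "text": "Buy now"},
]
stored = {"id": "buy-btn", "tag": "button", "text": "Buy now"}

element, healed = find_element(page, stored)
if healed:
    stored = heal(stored, element)  # log the change so a human can review it
print(stored["id"], healed)
```

Note that a healed locator only proves the element was found again. Whether the user can actually reach and click it is a separate check.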

3. Flakiness resolution

Some tech professionals (even middle+) often think flakiness is a binary problem, while it’s more of a data science problem.

- What AI delivers: Checks 100 to 1,000+ previous runs, detects even subtle correlations, and automatically quarantines suspicious tests or applies dynamic retries.

- Gain: Trusted CI pipeline. Strong support for the dev team.
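To show why flakiness is a question of statistics rather than a yes/no flag, here is a minimal sketch that scores a test by how often its outcome flips between consecutive runs. The `flip_rate` metric, the 0.2 threshold, and the labels are assumptions made for illustration; real tools look at far more signals.

```python
def flip_rate(history):
    """Share of consecutive run pairs whose outcome differs (pass -> fail or fail -> pass)."""
    if len(history) < 2:
        return 0.0
    flips = sum(1 for a, b in zip(history, history[1:]) if a != b)
    return flips / (len(history) - 1)


def classify(history, flaky_threshold=0.2):
    """Label a test from its pass/fail history (True means the run passed)."""
    if all(history):
        return "stable"
    if not any(history):
        return "failing"  # consistently red: likely a real defect
    if flip_rate(history) >= flaky_threshold:
        return "quarantine"  # outcome flips often: likely flaky
    return "investigate"  # rare failures: needs a human look


runs = {
    "login_ok": [True] * 10,
    "checkout_total": [False] * 5,
    "search_autocomplete": [True, False, True, False, True],
    "export_csv": [True] * 9 + [False],
}
labels = {name: classify(history) for name, history in runs.items()}
print(labels)
```

A test that went red once after nine green runs is not quarantined here. It is handed to a person, because a single new failure may be a real regression.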

4. Log and failure analytics

Classifying failed tests is a crucial part of the QA team’s work. And this is where an AI testing vs human testers battle turns into a one-horse race.

What AI delivers: OwlityAI, for example, clusters failures by similarity (for instance, Levenshtein distance between stack traces) to group them by root cause (gateway timeout, missed locator, logic bug, etc.). That is way faster than humans; additionally, the model learns from your app, so every cycle becomes faster and more efficient.

Gain: The tool significantly shrinks one of the key metrics — time to diagnosis.
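For readers who want to see the mechanics, below is a rough sketch of grouping failure messages by edit distance. It is an assumption-laden illustration and not OwlityAI’s actual algorithm: the greedy grouping, the 0.3 ratio, and the sample messages are all made up for the example.

```python
def levenshtein(a, b):
    """Edit distance between two strings (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def cluster(messages, max_ratio=0.3):
    """Greedy grouping: a message joins the first group whose representative is close enough."""
    clusters = []
    for msg in messages:
        for group in clusters:
            rep = group[0]
            if levenshtein(msg, rep) / max(len(msg), len(rep), 1) <= max_ratio:
                group.append(msg)
                break
        else:
            clusters.append([msg])
    return clusters


messages = [
    "TimeoutError: gateway timed out after 30s on /api/orders",
    "TimeoutError: gateway timed out after 31s on /api/orders",
    "NoSuchElementException: locator #buy-btn not found",
]
groups = cluster(messages)
print(len(groups), [len(g) for g in groups])
```

The grouping says which failures look alike. Naming the root cause behind each group, and deciding which one matters, remains a human call.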

5. Coverage expansion

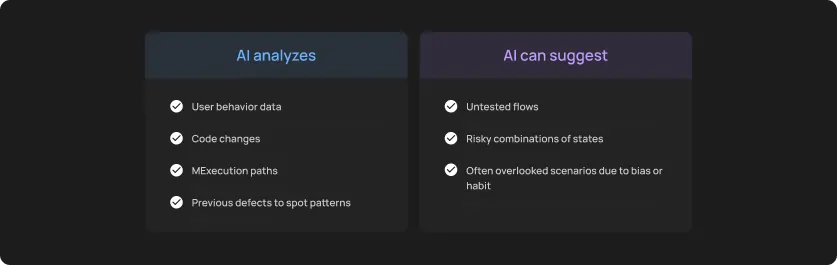

We can’t help but remind you what AI can’t do in QA. This time, though, we look from the other end: what it can do, and why.

Note: AI doesn’t decide what matters most, but rather what was missed.

6. Scenario generation with far larger amounts of data

If you put enough effort into structuring the training dataset, your tool will likely output:

- Boundary values

- Rare combinations

- High-cardinality datasets

Especially valuable in billing or multi-tenant logic.
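As a small illustration of the first two items, the sketch below builds classic boundary values for a numeric field and combines them with other inputs. The billing fields (`seats`, `plan`) and the 1 to 100 range are hypothetical; an AI tool would derive such ranges from your data rather than from hand-written constants.

```python
from itertools import product


def boundary_values(lo, hi):
    """Classic boundary-value set for an inclusive integer range."""
    return [lo - 1, lo, lo + 1, hi - 1, hi, hi + 1]


def combos(**fields):
    """Every combination of the given field values, as a list of dicts."""
    names = list(fields)
    return [dict(zip(names, values)) for values in product(*fields.values())]


# Assumed rule: a plan allows between 1 and 100 seats.
seat_cases = boundary_values(1, 100)
cases = combos(seats=seat_cases, plan=["monthly", "annual"])
print(len(cases), cases[0])
```

Six seat values times two plans already gives twelve cases. Add currencies, tenants, and discount rules, and the volume quickly goes beyond what a person would write by hand.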

7. Continuous monitoring

AI operates 24/7/365:

- Watching production signals

- Comparing expected vs real behavior

- Detecting drift between test and live environments

This makes QA a continuous system that handles everything humans alone can’t sustain.
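The drift check in the last bullet can be pictured as a simple comparison of two snapshots. This is a minimal sketch with made-up configuration keys. Real monitoring would pull these values from your environments and cover far more than configuration.

```python
def config_drift(test_env, live_env):
    """Return the keys whose values differ, mapped to (test_value, live_value)."""
    keys = sorted(test_env.keys() | live_env.keys())
    return {
        key: (test_env.get(key), live_env.get(key))
        for key in keys
        if test_env.get(key) != live_env.get(key)
    }


# Hypothetical snapshots of the two environments.
test_env = {"api_version": "2.3", "timeout_seconds": 30, "feature_new_checkout": True}
live_env = {"api_version": "2.4", "timeout_seconds": 10, "feature_new_checkout": True, "rate_limit": 100}

drift = config_drift(test_env, live_env)
for key, (in_test, in_live) in drift.items():
    print(f"{key}: test={in_test} live={in_live}")
```

A key that exists in only one environment shows up with `None` on the other side, which is often the most interesting kind of drift.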

The optimal model: people + AI working together

The best choice as of 2026 is so-called human-in-the-loop testing, where a QA Engineer strategizes, decides, and directs, and AI executes and maintains. Let’s break this down into areas of responsibility.

What humans focus on

- Strategy and architecture: Test pyramid, deciding which flows require E2E coverage and which require unit testing, definition of done.

- Risk evaluation: Crisis planning (what we do if a broken feature ships or a data breach happens) and prioritizing testing efforts based on business severity.

- Requirements engineering: Translation of PM requirements into clear tasks.

- Judgment and trade-offs: Deciding to ship with known non-critical bugs to meet a market window.

- Situations where contextual knowledge makes the difference: Crafting multi-stage tests that mimic specific user behaviors: plan upgrades, downgrades, refund requests, etc.

- Non-functional quality: Evaluating performance, usability, brand voice, and accessibility through the user’s lens.

- Release decisions: Interpreting the tool’s data to make the final go/no-go.

What AI focuses on

- Repetitive tasks: Running the same regression suite 300 times a day.

- Execution: Managing parallel cloud infrastructure, spinning up containers, and executing tests across 20 browser versions simultaneously.

- Maintenance: Automatically updating locators and healing scripts.

- Pattern recognition: Scanning logs for anomalies, detecting timing drifts, and flagging flaky behavior patterns.

- Scaling without losing quality or smoothness: Generating boilerplate code for thousands of API endpoints.

How to structure a QA team in the AI-driven era

If you ask us what main shift a founder or CTO should go through before introducing autonomous software testing, we’d name the change from “AI vs human decision-making” to “AI plus human decision-making”.

The most successful companies are moving from a binary perception of modern technologies to hybrid models where humans and technologies complement each other.

Your blueprint for an AI-augmented team

- Quality Strategist: The primary decision-maker, who defines what needs testing based on business risk, user analytics, and product roadmaps. Their core responsibilities:

  - Defines risk- and roadmap-based testing strategy

  - Prioritizes flows

  - Aligns those priorities with product and revenue goals

  - Owns release readiness criteria

- AI Testing Supervisor: An SRE for intelligent testing, responsible for managing the fleet of autonomous agents. They configure the AI’s parameters, tune its sensitivity to UI changes, and oversee the self-healing logs.

- Testers validating AI output: Review AI-generated scenarios for logic gaps, ensuring the machine hasn’t hallucinated a passing test for a broken feature.

- Automation Lead: Handles 20% of complex, multi-system integration tests that require custom coding and deep architectural knowledge.

- DevOps Engineer: Ensures the CI/CD pipeline is robust enough to handle the high volume of parallel tests generated by AI. Their core responsibilities:

  - Integrates AI tests into CI/CD

  - Maintains execution environments

  - Owns observability, stability, and performance of test runs

  - Ensures fast feedback

Workflows for AI QA governance

Prevent chaos in AI adoption with the following five governance processes.

- Review cycles: Check AI-generated scripts exactly like you check code from a junior developer. Challenge them before merging into the main regression suite.

- Clear risk prioritization model: You’d better have a human-defined framework that tells the AI tool which parts of the application take priority over the rest. OwlityAI has a smart algorithm that helps QA assign precise priority; yet, it’s still better to trust but verify.

- Test observability dashboards: Set up a single “source of truth” for your testing metrics — the dashboard with coverage, stability trends, time shifts, general efficiency, and (ideally) ROI of an AI-augmented approach.

- Hybrid manual + AI exploratory testing: A dedicated timebox where AI scans for structural regressions while humans perform creative exploratory testing.

- Release quality reviews: A final human sign-off: AI provides the data (the mentioned dashboard), and the Quality Strategist makes the go/no-go decision.

When relying on AI too much becomes dangerous

Artificial intelligence is a powerful accelerator, but it’s high time to change the “set it and forget it” perception. We shouldn’t let modern technologies create a false sense of security and let them take over our jobs (calm down, just joking).

Yet, over-relying on any automation tool is probably more dangerous than having no automation at all.

The visible risks of overtrust

- AI masking real bugs: A self-healing agent might fix a broken selector on a button that is actually visually covered by a pop-up. The test passes, but the user is blocked… and you have just lost a potential customer.

- Green dashboard trap: When teams forget the limitations of AI in software testing and stop checking suites because “OwlityAI handles it”, things might go wrong.

- AI misinterprets newer flows: When a feature drastically changes, the AI tool might flag the new behavior as a bug or try to force the old path; that’s how most of the current models work.

- Teams losing product context: If your QA team last surfed the app a couple of months ago, it’s a sign to cut their salary to emphasize the influence of their work on the final product.

The hidden risks of overtrust

- Feedback loops amplify wrong assumptions: AI trained on flawed expectations will confidently repeat them.

- Exploratory skills worsen (personal risk): When testers stop exploring, they stop learning and decrease their value as professionals.

- Late discovery of systemic issues: AI often optimizes locally while missing cross-system failures.

Bottom line

More and more companies uncover the real limitations of AI in software testing. And the current question isn’t “will AI replace testers”, but “what’s the role of testers in the AI era”.

And as of 2026, the answer is clear — testers become architects, and AI is a multiplier. Modern tools automate maintenance, routine execution, and pattern matching, while humans can ascend to their true value: providing strategic insight and assessing business risk.

OwlityAI uses AI to automate the heavy lifting of regression and maintenance. If you are ready to level up your role, book a 30-min call with us.
