How AI understands business logic inside complex applications

Dmitry Reznik

Chief Product Officer


Imagine a neobank app.

Suppose we write a traditional automated script for it: click element #transfer-btn, type 500, click #submit.

If the transfer button moves to a sidebar or the daily limit logic changes to reject USD 500 transfers for new accounts, the script fails. It simply doesn’t know what a transfer is; it only knows x,y coordinates and DOM IDs.

Brittleness like this slowly erodes the product. Complex application testing turns into constant script maintenance and other routine chores, which devour the time and attention of your best specialists.

In contrast, modern software testing tools give that time back. They memorize steps and infer intent, which lets them take over the routine.

In our example, a modern tool would understand that a transfer flow requires a source, a destination, and a validation step.

This example leads to the main question of this article: how does AI understand business logic?

This guide breaks this question down.

First, what do we mean by “business logic understanding”?

When we’re talking about understanding, we don’t mean that an AI model literally knows the business goals in the way a product manager or domain expert does.

At the moment, AI doesn’t have intention, awareness, or strategic insight (in the common sense). Yet modern AI tools process signals from several app layers simultaneously and, in doing so, can infer structured behavior, relationships, and constraints that reflect business logic.

This is what we call “AI understands business logic” — a pattern-driven form of understanding derived from execution histories and semantic relationships among actions and outcomes.

AI in QA infers

- Which actions matter: Execution logs from previous runs let the model separate noise from relevant clicks and API calls.

- Which flows represent real user behavior: Inferred from previous logs, internal benchmarks, and ongoing analysis of user behavior.

- Which steps depend on others: Conditional branches, such as address validation that runs only after a shipping option is selected.

- What data is valid and what is not: Formats, ranges and limits, and specific constraints such as valid VAT numbers.

- What the user wants: Modern autonomous testing tools analyze typical user flows inside your app and spot potential roadblocks along the way: where the interface may feel intricate, or where a button placed in the right-hand corner of the screen may underperform.
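To make “what data is valid” more concrete, here is a minimal Python sketch that learns a numeric range from values the system accepted in past successful runs, then checks new values against it. The field name, the history, and the plain min/max rule are illustrative assumptions. A real tool would likely use softer, statistically derived bounds, especially on the upper end.

```python
from dataclasses import dataclass


@dataclass
class NumericConstraint:
    """A range observed for one field across historical, successful runs."""
    field: str
    minimum: float
    maximum: float

    def violates(self, value: float) -> bool:
        return value < self.minimum or value > self.maximum


def learn_constraint(field: str, accepted_values: list[float]) -> NumericConstraint:
    """Naive inference: the valid range is whatever the system accepted before."""
    if not accepted_values:
        raise ValueError(f"no history for field {field!r}")
    return NumericConstraint(field, min(accepted_values), max(accepted_values))


# Hypothetical history: quantities seen in successful checkouts.
quantity = learn_constraint("quantity", [1, 2, 1, 3, 10, 4, 1])

# If the app just accepted -5, the learned range says it probably should not have.
for candidate in (3, -5):
    if quantity.violates(candidate):
        print(f"Possible logic defect: quantity={candidate} is outside "
              f"[{quantity.minimum}, {quantity.maximum}] seen in history")
```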

Examples of semantic AI testing

- Authentication rules: You may have certain “protected” routes (like /dashboard/settings). AI recognizes them and, without hints from you, inserts a login step into any test that targets these pages.

- Mandatory fields: AI learns, and keeps learning, which inputs are optional and which are required by observing 400 Bad Request responses when fields are left empty.

- Data flows: AI tries entering negative values into a quantity field. If the system accepts -5 items, AI flags a logic defect, because “quantity > 0” is an e-commerce constraint it learned from historical data.

- Checkout workflows and multi-step wizard flows: Modern testing tools handle data from different promo campaigns and apply it in a checkout flow (promo code usage, for instance). They also understand wizard flows. Say you broke onboarding down into four smaller steps to make it easier for users. AI still perceives it as one cohesive unit. If a developer reorders Step 2 and Step 3, the tool adapts the test execution order rather than failing. It understands that the goal is complete onboarding, not testing separate buttons and fields.
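The wizard example can be sketched in Python as a goal-driven loop. Each step handler is keyed by the step’s purpose rather than its position, so swapping Step 2 and Step 3 does not break the run. `FakeWizard`, the step names, and the handlers are hypothetical stand-ins. The sketch does not show how a real tool detects a screen’s purpose (from labels, fields, or history).

```python
from typing import Callable


class FakeWizard:
    """Hypothetical stand-in for an onboarding wizard with ordered screens."""

    def __init__(self, steps: list[str]) -> None:
        self.steps = steps
        self.position = 0
        self.filled: dict[str, str] = {}

    def current_purpose(self) -> str:
        # A real tool would infer this from the screen; here it is given.
        return self.steps[self.position] if self.position < len(self.steps) else "done"

    def submit(self, data: str) -> None:
        self.filled[self.current_purpose()] = data
        self.position += 1


# Handlers are keyed by purpose, not by step number.
HANDLERS: dict[str, Callable[[FakeWizard], None]] = {
    "account": lambda w: w.submit("alice@example.com"),
    "profile": lambda w: w.submit("Alice"),
    "preferences": lambda w: w.submit("dark-mode"),
    "confirm": lambda w: w.submit("yes"),
}


def complete_onboarding(wizard: FakeWizard, max_steps: int = 10) -> bool:
    """Drive the wizard toward its goal in whatever order the steps appear."""
    for _ in range(max_steps):
        purpose = wizard.current_purpose()
        if purpose == "done":
            return True
        handler = HANDLERS.get(purpose)
        if handler is None:
            return False  # unknown step: surface it for human review
        handler(wizard)
    return False


original = FakeWizard(["account", "profile", "preferences", "confirm"])
reordered = FakeWizard(["account", "preferences", "profile", "confirm"])  # steps 2 and 3 swapped
print(complete_onboarding(original), complete_onboarding(reordered))
```

Returning `False` on an unknown step, instead of guessing, keeps new or unexpected screens visible to a human reviewer.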

It doesn’t seem like guesswork, does it? The lion’s share of user actions follows patterns, and AI recognizes them quickly and at scale.

How AI actually understands business logic inside complex applications

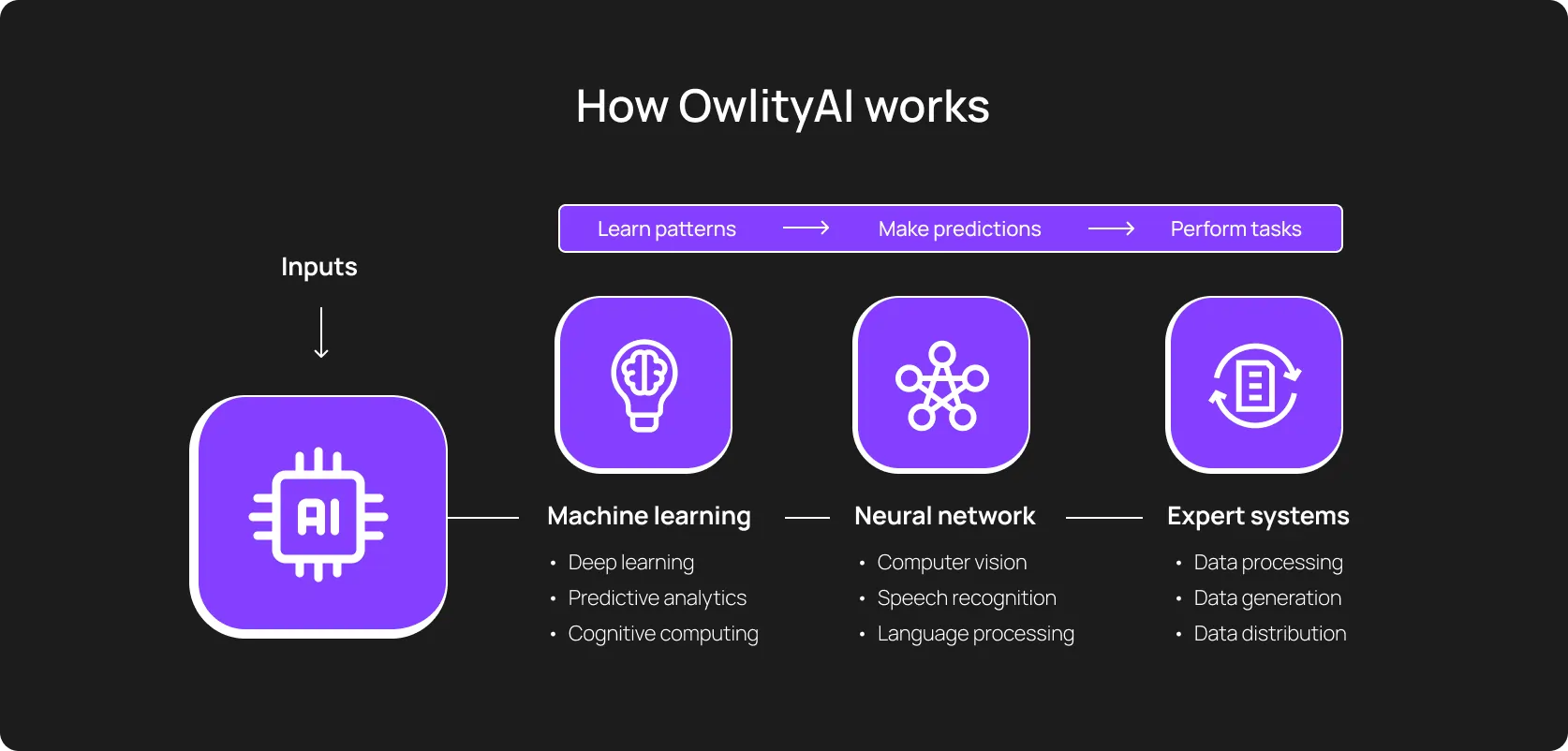

Let’s step away from software testing for a moment and talk about what we mean by machine thinking, inference, and decision-making.

By default, machines do exactly what they were told. AI, by contrast, relies on probabilistic judgments: it figures out how to achieve a goal based on all the data and inputs it has. It builds a mental model of your app by ingesting seven distinct layers of data.

1. AI analyzes user interface semantics (visual + structural)

Artificial intelligence has a leg up on humans in both the amount of data it can process and its processing speed. It goes beyond simple visual comparison with your style guide and documentation. It reads the structure and meaning of labels, headings, input types, proximity, grouping, and hierarchy.

A “Submit” or “Confirm” action that sits at the end of a grouped form is not just another button; it is a state transition. Repeated patterns such as form → submit → confirmation message tell the system where a business action begins and where it ends.

- Visual hierarchy: A high-contrast button at the bottom of a form is likely the submit or purchase action, so the model can distinguish it from a cancel button by position and prominence alone.

- Accessibility tree: AI reads the accessibility tree (the roles, names, and states the browser exposes to assistive technologies) to understand the purpose of each element. Even if an element has no clear ID, the model can tell that it acts as a button.

- Form logic: It scans input types (type="email", type="password") and infers data constraints before typing anything. A good example is a CVV field next to a card number field: for a properly trained model, it’s clear that the CVV should contain 3 or 4 digits.
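Here is a minimal sketch of the form-logic idea using Python’s standard `html.parser`. It collects `<input>` attributes and maps them to expected constraints before anything is typed. The name-based CVV heuristic and the rule format are assumptions for illustration. A trained model would also weigh labels, layout, and history.

```python
from html.parser import HTMLParser


class InputCollector(HTMLParser):
    """Collect the attributes of every <input> in a form snippet."""

    def __init__(self) -> None:
        super().__init__()
        self.inputs: list[dict[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag == "input":
            # Boolean attributes like `required` arrive with a value of None.
            self.inputs.append({k: (v or "") for k, v in attrs})


def infer_constraints(field: dict[str, str]) -> dict[str, object]:
    """Map input attributes (plus a name heuristic) to expected data constraints."""
    rules: dict[str, object] = {"required": "required" in field}
    kind = field.get("type", "text")
    name = field.get("name", "").lower()
    if kind == "email":
        rules["format"] = "email"
    elif kind == "password":
        rules["masked"] = True
    if "cvv" in name or "cvc" in name:
        # Heuristic: card security codes are 3 or 4 digits.
        rules["pattern"] = r"^\d{3,4}$"
    if "maxlength" in field:
        rules["max_length"] = int(field["maxlength"])
    return rules


form = """
<form>
  <input type="email" name="email" required>
  <input type="text" name="card_number" inputmode="numeric">
  <input type="text" name="cvv" maxlength="4">
</form>
"""
collector = InputCollector()
collector.feed(form)
for field in collector.inputs:
    print(field["name"], infer_constraints(field))
```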

2. AI scans behavior and recreates user flows from it

The real learning process goes beyond UI semantics and is quite human-like: the system takes specific actions, observes the results, and adjusts. The gap between the expected result and the actual one guides the model.

Over time, this produces a behavioral map of success states (an order confirmation, a saved profile, etc.) and validation or permission errors.
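One way to picture such a behavioral map is as a graph built from observed (state, action, resulting state) triples, with each action labeled by the outcome it led to. The state names and the success and error sets below are hypothetical. A real tool would derive them from UI and API signals rather than a hand-written list.

```python
from collections import defaultdict

# One observation: (state before, action taken, state after).
Observation = tuple[str, str, str]

# Hypothetical outcome classes; a real tool would learn these.
SUCCESS_STATES = {"order_confirmed", "profile_saved"}
ERROR_STATES = {"validation_error", "permission_denied"}


def build_behavior_map(observations: list[Observation]) -> dict[str, dict[str, set[str]]]:
    """Map each state to the actions tried there and the states they led to."""
    graph: dict[str, dict[str, set[str]]] = defaultdict(lambda: defaultdict(set))
    for before, action, after in observations:
        graph[before][action].add(after)
    return graph


def label_actions(graph: dict[str, dict[str, set[str]]], state: str) -> dict[str, str]:
    """Label each action available from `state` by the kind of result it produced."""
    labels = {}
    for action, results in graph.get(state, {}).items():
        errors, successes = results & ERROR_STATES, results & SUCCESS_STATES
        if errors and successes:
            labels[action] = "mixed"
        elif errors:
            labels[action] = "error"
        elif successes:
            labels[action] = "success"
        else:
            labels[action] = "intermediate"
    return labels


runs = [
    ("cart", "click_checkout", "checkout"),
    ("checkout", "submit_payment", "order_confirmed"),
    ("checkout", "submit_empty_form", "validation_error"),
    ("settings", "save_profile", "profile_saved"),
    ("settings", "open_admin_panel", "permission_denied"),
]
behavior = build_behavior_map(runs)
print(label_actions(behavior, "checkout"))
```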

3. AI uses history

With [XX] of analyzed executions (the exact number depends on your project and initial data intake), the tool has a sufficient “argument base” to make decisions.

- Stability profiling: Tools like OwlityAI build a statistical profile for every element. If the Load more button typically takes 200 ms to render but occasionally takes about 2 seconds, the tool adjusts its wait time dynamically.

- Drift detection: OwlityAI notices gradual changes. If the Save button moves slightly to the left with every release, the tool memorizes this drift. If the button suddenly disappears or jumps 500 px, OwlityAI flags it immediately.

This is how AI distinguishes expected variation from a broken path, even a subtly broken one.
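Both ideas can be sketched in a few lines of Python:

- A wait time derived from a high percentile of observed render times.
- A drift check that tolerates small per-release shifts but flags a missing element or a large jump.

The 99th percentile, the 1.5× headroom, and the 100 px jump threshold are illustrative assumptions, not OwlityAI’s actual parameters.

```python
import math
from typing import Optional


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile of the observed values."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def dynamic_wait_ms(render_times_ms: list[float], pct: float = 99, headroom: float = 1.5) -> float:
    """Wait long enough for nearly every render seen so far, plus headroom."""
    return percentile(render_times_ms, pct) * headroom


def classify_move(history_x: list[float], new_x: Optional[float], jump_px: float = 100) -> str:
    """Accept gradual release-to-release drift; flag disappearance or sudden jumps."""
    if new_x is None:
        return "flag: element missing"
    deltas = [abs(b - a) for a, b in zip(history_x, history_x[1:])]
    typical = max(deltas, default=0)  # largest shift seen between past releases
    shift = abs(new_x - history_x[-1])
    if shift > max(jump_px, 3 * typical):
        return "flag: sudden jump"
    return "ok: gradual drift"


# Hypothetical data: "Load more" usually renders in ~200 ms, occasionally ~2 s.
load_more_ms = [200] * 48 + [1900, 2100]
print(dynamic_wait_ms(load_more_ms))

# Hypothetical x positions of the Save button over four releases (4 px left each time).
save_x = [400, 396, 392, 388]
print(classify_move(save_x, 384), classify_move(save_x, 888), classify_move(save_x, None))
```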

4. API responses teach AI

AI-powered testing tools collect and analyze how the system responds to their actions: HTTP status codes, validation messages, and response timing.

This lets the tools learn which data is accepted, which actions require authentication, and what a valid system state looks like. For example, a 403 Forbidden response after a certain action marks a permission boundary.
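Here is a minimal Python sketch of how status codes could be turned into learned constraints. The endpoint names and roles are hypothetical. So is the rule that a 400 after omitting exactly one field marks that field as required. Real tools also read validation messages and timing.

```python
from dataclasses import dataclass, field


@dataclass
class Knowledge:
    """Facts inferred about the app from how its API responds."""
    requires_auth: set[str] = field(default_factory=set)
    forbidden_for_role: set[tuple[str, str]] = field(default_factory=set)
    required_fields: dict[str, set[str]] = field(default_factory=dict)


def learn_from_response(k: Knowledge, endpoint: str, role: str,
                        omitted_fields: set[str], status: int) -> None:
    """Update inferred constraints from one request/response pair."""
    if status == 401:
        k.requires_auth.add(endpoint)  # request was not authenticated
    elif status == 403:
        k.forbidden_for_role.add((endpoint, role))  # authenticated, but not allowed
    elif status == 400 and len(omitted_fields) == 1:
        # Only one field was left empty, so it is the likely cause of the rejection.
        k.required_fields.setdefault(endpoint, set()).update(omitted_fields)


k = Knowledge()
learn_from_response(k, "POST /transfers", "guest", set(), 401)
learn_from_response(k, "GET /admin/reports", "customer", set(), 403)
learn_from_response(k, "POST /profile", "customer", {"email"}, 400)
learn_from_response(k, "POST /profile", "customer", {"phone"}, 200)
print(k)
```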

5. AI correlates data relationships across components

Complex software never operates in isolation: a change in one small corner of the app affects other parts of the system. During complex application testing, AI takes this into account and correlates shared data across components, services, and connected software.

- Cross-component ties: Microservices require thorough cause-and-effect tracking. For example, if a dashboard stays empty after a new item is created in another service, AI concludes that the integration logic failed, even though both UIs load correctly.

- Shared state recognition: If checkout generates order ID #12345, the model expects to see #12345 in the order history.
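The shared-state check can be sketched as a run context that captures a value produced in one component and verifies it in another. The page texts, the regex, and the `RunContext` class are hypothetical. A real tool would read these values from the DOM or from API responses.

```python
import re


class RunContext:
    """Remember values produced by earlier steps so later steps can verify them."""

    def __init__(self) -> None:
        self.values: dict[str, str] = {}

    def capture(self, key: str, text: str, pattern: str) -> str:
        match = re.search(pattern, text)
        if match is None:
            raise AssertionError(f"{key}: nothing matches {pattern!r}")
        self.values[key] = match.group(1)
        return self.values[key]

    def expect_in(self, key: str, text: str) -> bool:
        # Word boundaries stop "12345" from matching inside "123456".
        return re.search(rf"\b{re.escape(self.values[key])}\b", text) is not None


ctx = RunContext()
ctx.capture("order_id", "Thank you! Your order #12345 has been placed.", r"order #(\d+)")

order_history = "Recent orders: #12299, #12310"  # hypothetical: the new order is missing
if not ctx.expect_in("order_id", order_history):
    print(f"Cross-component defect: order {ctx.values['order_id']} not in order history")
```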

6. AI monitors execution context to infer intent

Deep contextual knowledge has always been one of the most significant human advantages over technology. People naturally read cues such as:

- Page purpose

- Layouts that adapt to the traffic source (in a web app)

- Element grouping that accommodates users with disabilities alongside everyone else

AI picks up these cues too, through semantic understanding in QA. The difference is that the intent AI infers depends on the history of flows completed over a given period.

Let’s go back to the neobank example from the beginning. The main action (e.g., making a large transfer) should be treated differently from a secondary one. For this reason, the tool scans CRUD operations, onboarding wizards, and transactional confirmations to build a prior that shapes how it interprets each action.

In other words, AI considers not just what happened but what was supposed to happen. Its sense of the latter is shaped by previous runs.

7. Selectors → semantic understanding

Traditional automation clings to selectors, so a small move breaks the entire flow. This is a key difference from AI test automation, which combines natural language understanding, visual cues, and structural context to recognize components by their purpose.

A “place order” action remains the same logical step, even if the layout shifts or the underlying DOM is refactored.

Selectors are still there, but they become just one of many signals in the element profile.
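Here is a minimal Python sketch of matching an element by a weighted profile in which the selector is only one signal. The weights, the 0.6 threshold, and the `Element` fields are assumptions for illustration. Production tools combine many more signals, such as visual features, accessibility roles, and history.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Element:
    selector: str
    role: str
    text: str
    region: str  # e.g. "main", "sidebar", "footer"


# Hypothetical weights: the selector is just one of several signals.
WEIGHTS = {"selector": 0.2, "role": 0.3, "text": 0.4, "region": 0.1}


def similarity(profile: Element, candidate: Element) -> float:
    """Weighted match between a remembered element profile and a live element."""
    text_score = SequenceMatcher(None, profile.text.lower(), candidate.text.lower()).ratio()
    return (WEIGHTS["selector"] * (profile.selector == candidate.selector)
            + WEIGHTS["role"] * (profile.role == candidate.role)
            + WEIGHTS["text"] * text_score
            + WEIGHTS["region"] * (profile.region == candidate.region))


def find_element(profile: Element, page: list[Element], threshold: float = 0.6) -> Optional[Element]:
    """Return the best-matching element, or None if nothing is close enough."""
    best = max(page, key=lambda c: similarity(profile, c), default=None)
    if best is None or similarity(profile, best) < threshold:
        return None
    return best


place_order = Element("#place-order", "button", "Place order", "main")
# After a refactor: new generated ID, and the button moved to a sidebar.
refactored_page = [
    Element("#btn-7f3a", "button", "Place order", "sidebar"),
    Element("#btn-cancel", "button", "Cancel", "sidebar"),
    Element("#promo", "textbox", "Promo code", "sidebar"),
]
print(find_element(place_order, refactored_page))
```

Returning `None` below the threshold, rather than the closest element regardless of score, is what keeps a “healed” test from silently clicking the wrong thing.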

Where AI excels at understanding business logic

Mapping complex user journeys at scale

Humans are irrational and often don’t understand their own behavior. This forces teams to map every possible user path, and this is where AI excels.

It maps thousands of executions across environments: happy paths, alternate routes, retries, partial failures, abandoned journeys.

Large products benefit the most, because AI spots shadow flows: combinations of steps that are technically allowed and frequently used, but never formally specified.

Detecting hidden dependencies between components

Modern systems are full of implicit coupling:

- A change in profile data affects checkout

- A permissions tweak breaks reporting

- A backend validation change subtly alters UI behavior three screens later

AI sees these chains and builds a dependency graph over time.
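Once dependencies are known, finding what a change might affect is a graph traversal. This sketch assumes the “A affects B” edges are already given. How a tool learns them from co-occurring changes and failures is out of scope. The edges are hypothetical and mirror the three chains listed above.

```python
from collections import defaultdict, deque


def build_graph(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Each edge reads 'source affects target'."""
    graph: dict[str, set[str]] = defaultdict(set)
    for source, target in edges:
        graph[source].add(target)
    return graph


def impacted_by(graph: dict[str, set[str]], changed: str) -> set[str]:
    """Everything reachable from the changed component, via breadth-first search."""
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


# Hypothetical edges mirroring the examples above.
graph = build_graph([
    ("profile_data", "checkout"),
    ("permissions", "reporting"),
    ("backend_validation", "address_screen"),
    ("address_screen", "shipping_screen"),
    ("shipping_screen", "payment_screen"),
])
print(sorted(impacted_by(graph, "backend_validation")))
```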

Identifying regression risks

Traditional regression testing is binary: pass or fail. AI-driven regression testing recognizes gradients: how far a code change’s effects can reach, and under what conditions.

If a flow that was stable for hundreds of runs suddenly shows different response patterns, timing, or outcomes, OwlityAI flags a risk even though the test passed, because the deviation may signal an upcoming hard failure.

Note: The model needs robust data (including a baseline) and historical logs.
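As a rough illustration, here is a Python sketch that uses a simple z-score to flag a passed run whose duration deviates sharply from the baseline. Timing is only one of the signals mentioned above. The baseline numbers and the 3.0 limit are assumptions.

```python
import math
import statistics


def soft_regression(baseline_ms: list[float], latest_ms: float, passed: bool,
                    z_limit: float = 3.0) -> str:
    """Classify a run: hard failure, clean pass, or pass with a suspicious deviation."""
    if not passed:
        return "fail"
    mean = statistics.mean(baseline_ms)
    stdev = statistics.stdev(baseline_ms)  # needs at least two baseline runs
    if stdev == 0:
        z = 0.0 if latest_ms == mean else math.inf
    else:
        z = (latest_ms - mean) / stdev
    if abs(z) > z_limit:
        return f"pass, but flagged (z={z:.1f})"
    return "pass"


# Hypothetical baseline durations (ms) for a checkout flow that has been stable.
baseline = [980, 1010, 1000, 995, 1020, 990, 1005]
print(soft_regression(baseline, 1012, passed=True))
print(soft_regression(baseline, 1400, passed=True))
```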

Handling dynamic, ever-changing UIs

Sometimes a minor redesign drains weeks of work in a second.

AI anchors tests to intent and semantics, making the testing system resilient to layout shifts, renaming, and component refactoring.

A checkout flow remains a checkout flow even after the design team has a creative afternoon. And, as we said before, we humans are irrational: if the CPO wants that button to be more “buttoner”, OwlityAI understands and doesn’t judge.

Recognizing valid vs. invalid states

What’s a “normal” state? At bottom, AI doesn’t know what normal really is, but it memorizes the normal you feed it:

- Response codes

- Messages

- State transitions

- Timing and form patterns

The tool memorizes inputs, organizes them, and notices deviations.

Humans might miss soft failures in manual runs, and scripts often don’t assert deeply enough.

Predicting where bugs are likely to occur

OwlityAI and similar tools analyze historical commit data and test failures to identify hotspots: modules with high cyclomatic complexity and frequent churn.

For example, the tools can infer that the tax calculation engine is logically fragile because it has broken 14 times in the last year.

It’s very similar to a senior developer’s gut feeling, but with data to back it up.
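A hotspot ranking of this kind can be sketched as a weighted score over normalized churn, complexity, and failure counts. The module names, the weights, and every number except the tax engine’s 14 failures are hypothetical. Real tools may weight these signals very differently.

```python
from dataclasses import dataclass


@dataclass
class ModuleStats:
    name: str
    commits_last_year: int       # churn
    cyclomatic_complexity: int
    failures_last_year: int


def normalize(values: list[float]) -> list[float]:
    """Scale values to 0..1 relative to the largest one."""
    top = max(values) or 1  # avoid division by zero when all values are 0
    return [v / top for v in values]


def hotspot_ranking(modules: list[ModuleStats],
                    weights: tuple[float, float, float] = (0.3, 0.3, 0.4)) -> list[tuple[str, float]]:
    """Rank modules by a weighted mix of churn, complexity, and past failures."""
    churn = normalize([m.commits_last_year for m in modules])
    complexity = normalize([m.cyclomatic_complexity for m in modules])
    failures = normalize([m.failures_last_year for m in modules])
    scored = [
        (m.name, weights[0] * c + weights[1] * x + weights[2] * f)
        for m, c, x, f in zip(modules, churn, complexity, failures)
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


modules = [
    ModuleStats("tax_engine", commits_last_year=120, cyclomatic_complexity=48, failures_last_year=14),
    ModuleStats("user_profile", commits_last_year=40, cyclomatic_complexity=12, failures_last_year=2),
    ModuleStats("admin_reports", commits_last_year=15, cyclomatic_complexity=30, failures_last_year=1),
]
for name, score in hotspot_ranking(modules):
    print(f"{name}: {score:.2f}")
```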

Where AI still needs humans and why

Despite its processing power, AI suffers from the Oracle Problem: it can determine whether the software is consistent, but not whether it is right.

1. Understanding domain-specific rules

As we stated above, any AI model needs robust data, including domain rules and contextual knowledge: what works in the industry and what doesn’t, who leads the game, and what the dos and don’ts are under specific conditions.

Healthcare, finance, logistics, and other highly regulated environments have many restrictions, rules, and laws. Even with all of them in place, the system sometimes behaves otherwise. This is especially true for ethical boundaries and emotional intelligence.

2. Interpreting ambiguous behavior

Interestingly, modern AI models perform far better under strict rules (read: patterns). HIPAA in healthcare or Basel III in banking define industry business logic.

Even when a tool encodes these rules as constraints, responsibility for correctness remains with humans.

Bug or feature, that is the question. If a system allows an action, should it? If a flow succeeds but feels wrong, how do you double-check? That judgment remains human.

3. Risk prioritization

The core idea: issues differ in importance, and humans judge risk differently than machines do.

- A broken admin report matters far less than a broken payment flow

- Machines assess risk mathematically; humans assess it holistically, including the emotional side

AI spots instability, but it takes humans to understand the actual impact of that instability on users.

4. Setting acceptance criteria

AI lives in the existing world. It can’t fully imagine what the world will look like “if we meet specific criteria”. And that is exactly what acceptance criteria define: a future, better state of things.

Humans translate product intent into concrete expectations: what counts as success, what is acceptable degradation, and what is explicitly forbidden.

This comes down to what we’ve already stressed several times: robust data for model training.

5. Defining meaning behind data

AI understands business logic as a set of structures, correlations, and anomalies. We, in turn, see purpose.

A number change might be correct in one context and catastrophic in another. A holistic overview of your product, your industry, and all potential scenarios helps the model here.

But teams often don’t provide one. Sometimes there isn’t even a hint of why the testing system exists.

6. Handling edge-case domain knowledge

Legacy is an inevitable part of a robust testing system (and, really, of any project):

- Never do [X] during end-of-month processing

- This field exists only because of a customer from 2017

- Never flag a user who found us on IG and didn’t like a single post — just delete its orde…

The last one is a bit exaggerated, but to put it plainly: AI has no institutional memory unless you teach it. These edge cases live in people’s heads, old tickets, and war stories.

A practical framework for using AI to model business logic

Complex application end-to-end testing requires a clear division of roles: what humans are responsible for, and what scope AI can handle under what conditions.

Step 1: AI maps all user flows

Give the testing tool access to your staging environment with a valid set of test credentials. Alternatively, create a production-like environment and enable the agent’s navigation, authentication, and core actions.

Let the agent crawl the application autonomously. In this phase, the model explores every clickable element, form, and navigation path.

The result should be a comprehensive visual map of your application’s actual behavior.

Step 2: Identify the critical business paths

Your turn. Separate the wheat from the chaff and prioritize paths by:

- Revenue impact: Checkout, subscription upgrades, and other flows that directly affect profit or churn

- User retention: Core functionality and features that cause churn if broken

- Compliance and brand risk: Flows involving data privacy or regulated calculations

Step 3: Size up the tool’s assumptions

The tool will group the collected flows into logical units. Review them.

Tip: Treat flows you didn’t anticipate as hypotheses. Either confirm them against the product or explicitly mark them as invalid to prevent quiet hallucinations.

Step 4: Add human context

It’s high time to inject domain-specific rules that an outsider wouldn’t know: constraints, mandatory actions, numbers. For example, a user with the “student” role must never see the premium feature.
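Human-supplied rules like this can be encoded as declarative invariants that every observed state is checked against. The `Observation` shape and the feature names are hypothetical. The sketch assumes a tool can collect the visible features of a session.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Observation:
    role: str
    visible_features: set[str]


@dataclass
class DomainRule:
    description: str
    violated_by: Callable[[Observation], bool]


# Hypothetical feature names; substitute whatever your app calls its premium features.
PREMIUM_FEATURES = {"premium_analytics", "premium_export"}

RULES = [
    DomainRule(
        "A user with the 'student' role must never see a premium feature",
        lambda obs: obs.role == "student" and bool(obs.visible_features & PREMIUM_FEATURES),
    ),
]


def check(obs: Observation) -> list[str]:
    """Return the descriptions of every rule this observed state violates."""
    return [rule.description for rule in RULES if rule.violated_by(obs)]


print(check(Observation("student", {"dashboard", "premium_export"})))
```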

Step 5: Monitor changes and detect drifts

With the logic established, give the autonomous testing tool a baseline of normal behavior.

Alongside typical failures, the tool should flag business inconsistencies: a checkout process taking 30% longer or an extra step appearing in the signup flow.
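Both example inconsistencies can be checked against a baseline in a few lines of Python. The step names and durations are illustrative. The 30% threshold comes from the example above. A real baseline would aggregate many runs rather than a single one.

```python
from dataclasses import dataclass


@dataclass
class FlowRun:
    steps: list[str]
    duration_s: float


def business_drift(baseline: FlowRun, latest: FlowRun, slowdown_limit: float = 0.30) -> list[str]:
    """Report structural and timing deviations from a baseline run."""
    findings = []
    extra = [s for s in latest.steps if s not in baseline.steps]
    missing = [s for s in baseline.steps if s not in latest.steps]
    if extra:
        findings.append(f"new steps: {extra}")
    if missing:
        findings.append(f"missing steps: {missing}")
    slowdown = (latest.duration_s - baseline.duration_s) / baseline.duration_s
    if slowdown >= slowdown_limit:
        findings.append(f"{slowdown:.0%} slower than baseline")
    return findings


# Hypothetical signup runs: a new verification step appears and the flow slows down.
baseline = FlowRun(["email", "password", "confirm"], duration_s=20.0)
latest = FlowRun(["email", "password", "phone_verification", "confirm"], duration_s=27.0)
print(business_drift(baseline, latest))
```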

Step 6: Combine AI tests + human oversight

The finish line: integrate these verified AI tests into your CI pipeline. The testing tool handles repetitive execution and self-healing, while your team reviews weekly reports and adjusts the strategy.

And OwlityAI goes the extra mile here, since you don’t need experienced SDETs or ML engineers to operate it. An intuitive interface and real AI under the hood help you navigate effectively even without deep testing expertise.

But let’s take a minute to dive a bit deeper into it.

How OwlityAI interprets and stabilizes business logic

In a nutshell, it’s semantic understanding in QA at its best: OwlityAI builds a multidimensional graph of your app and ranks every component by importance based on its purpose.

- UI scanning: The accessibility tree, visual hierarchy, and natural language labels help the tool understand that, for example, a green circle icon opens a support bot in a popular messenger.

- Flow mapping: The tool autonomously visualizes exactly how users traverse between microservices and identifies coverage gaps.

- AI test generation: OwlityAI tracks how the app and the users behave and generates end-to-end test scenarios that reflect it.

- Self-healing intent preservation: When a selector breaks, OwlityAI re-evaluates the page to find the element that best matches the original business intent, then heals the test execution on its own.

The icing on the cake is the built-in analytics that connects tests to revenue: you can trace the dependency between broken paths and app performance.

Bottom line

New technologies have always scared humanity at first. AI is no exception.

The flip side is that early adopters have always been more successful than the Homunculus loxodontuses.

Semantic AI testing can either ease your work and change your game or disappoint you entirely (because you didn’t learn how to use it properly).

Yet genuine business logic automation doesn’t happen when you finally find that Money Button. It happens when you guide the strategy and let autonomous tools take over the routine and adapt to changes in the blink of an eye.
